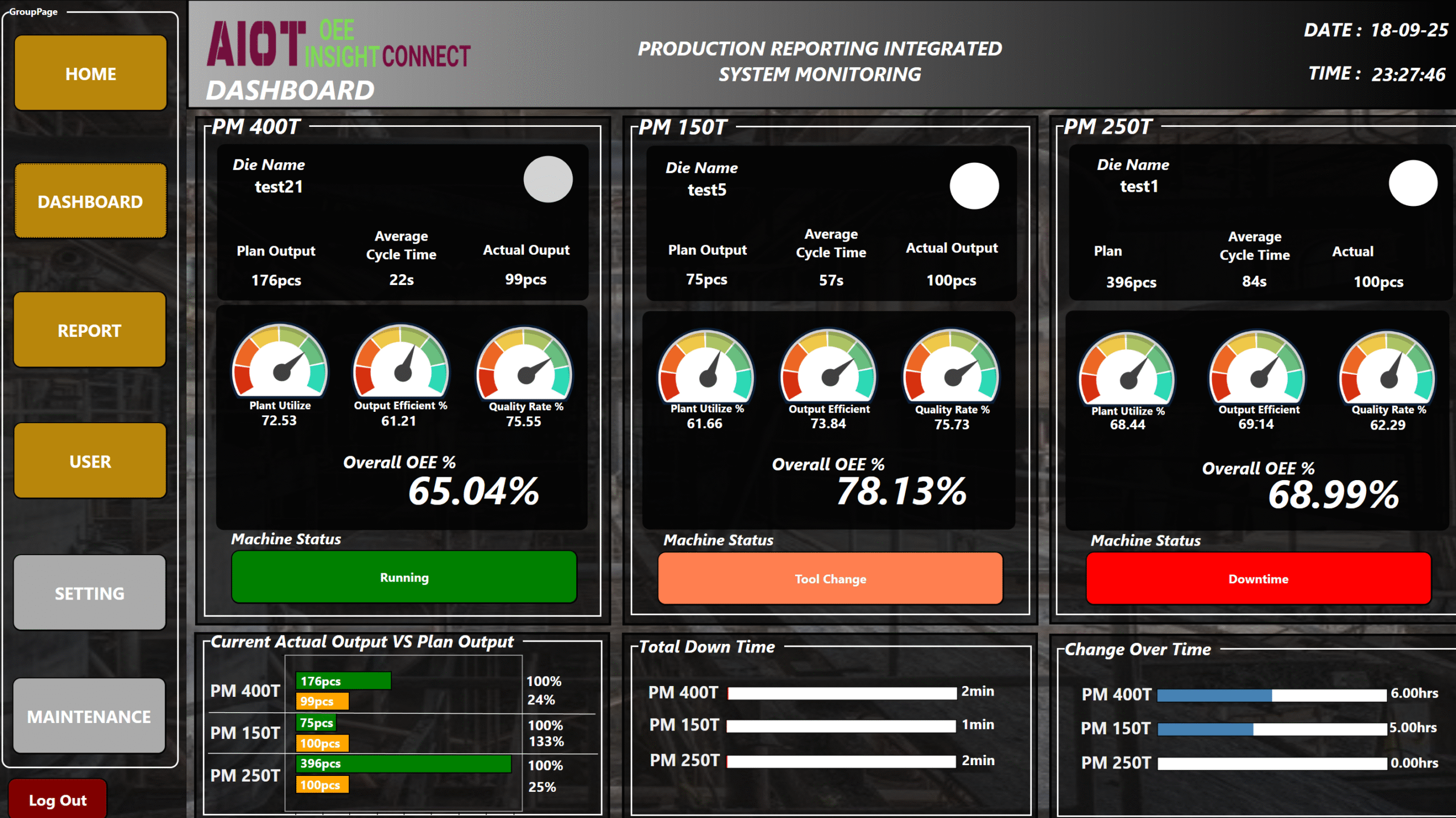

Is OEE Useless?

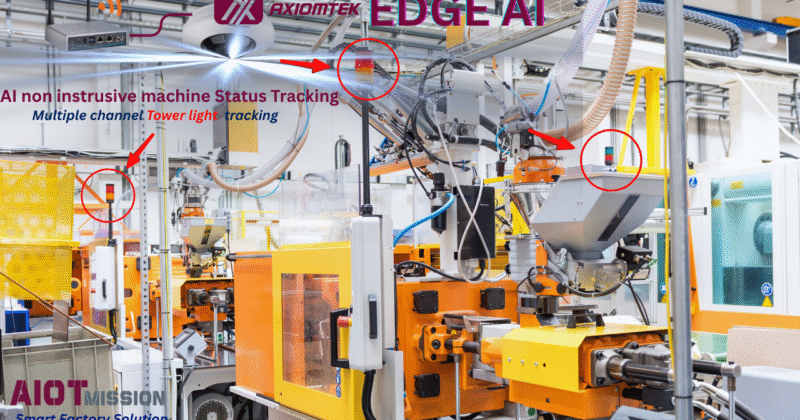

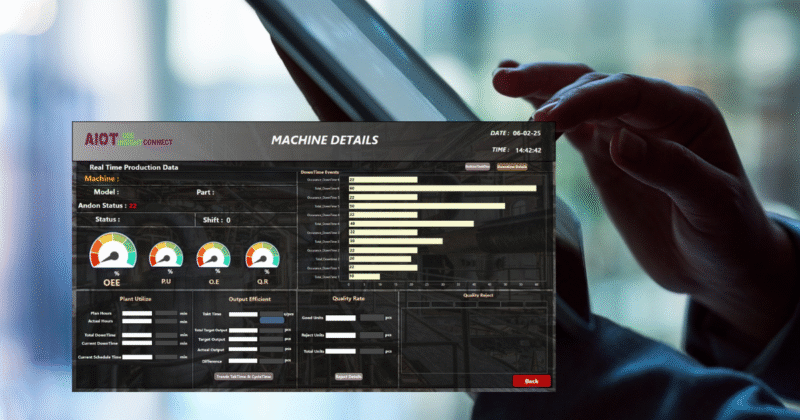

Is having OEE useless? Overall Equipment Effectiveness (OEE) has long been a standard manufacturing metric for evaluating how well a process is running, based on Availability, Performance, and Quality. A high OEE typically signals an efficient operation.

But here's the catch: OEE is a rearview mirror 😊 great for knowing what happened, but useless unless you can act on it.

Today, with Industry 4.0 taking root and SMEs pushing for smart manufacturing transformation, collecting OEE data is no longer the challenge. The real value lies in what you do with that data.

So how do you turn OEE into a forward-looking, action-driven tool?

3 Steps to Make OEE Truly Useful:

1. Get Insightful Data. Don't just chase the OEE number. Break it down. Understand the underlying variables and their impact.
2. Track the 6 Big Losses. Identify leakages in productivity. (If you missed our earlier post on this, you can find it in my last post!)
3. Act with Purpose. Use data-driven insights to implement continuous improvement plans: targeted, trackable,...
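
To make the "break it down" step concrete, here is a minimal Python sketch of the commonly used calculation, OEE = Availability × Performance × Quality. The `ShiftRecord` fields, the function names, and the sample numbers are all hypothetical and purely illustrative; how your plant defines planned time and downtime may differ.

```python
from dataclasses import dataclass


@dataclass
class ShiftRecord:
    """Hypothetical per-shift inputs; field names are assumptions."""
    planned_minutes: float      # planned production time
    downtime_minutes: float     # total stop time within the planned time
    ideal_cycle_seconds: float  # ideal time to produce one unit
    total_units: int            # all units produced, good or bad
    good_units: int             # units that passed quality checks


def oee_breakdown(rec: ShiftRecord) -> dict:
    """Return the three OEE factors and their product."""
    run_minutes = rec.planned_minutes - rec.downtime_minutes
    if rec.planned_minutes <= 0 or run_minutes <= 0 or rec.total_units <= 0:
        raise ValueError("need positive planned time, run time, and output")

    # Share of planned time the equipment was actually running.
    availability = run_minutes / rec.planned_minutes
    # Actual output compared with what the ideal cycle time would allow.
    performance = (rec.ideal_cycle_seconds * rec.total_units) / (run_minutes * 60)
    # Share of produced units that were good.
    quality = rec.good_units / rec.total_units

    return {
        "availability": availability,
        "performance": performance,
        "quality": quality,
        "oee": availability * performance * quality,
    }


def weakest_factor(breakdown: dict) -> str:
    """Name the lowest of the three factors, i.e. where to look first."""
    factors = {k: v for k, v in breakdown.items() if k != "oee"}
    return min(factors, key=factors.get)


if __name__ == "__main__":
    # Made-up numbers for one 8-hour shift.
    shift = ShiftRecord(
        planned_minutes=480,
        downtime_minutes=60,
        ideal_cycle_seconds=1.0,
        total_units=21000,
        good_units=20370,
    )
    result = oee_breakdown(shift)
    for name, value in result.items():
        print(f"{name}: {value:.1%}")
    print("look first at:", weakest_factor(result))
```

The point of returning all three factors rather than a single number is that the headline OEE hides which factor is dragging it down; pointing at the weakest one is a simple first step toward the loss tracking and targeted actions described above.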